The evolution of computing hardware traces a path from vacuum tubes to transistors and on to dense, scalable architectures built on steady miniaturization. Early designs centralized power and control; personal computers and parallel systems later dispersed compute. Software abstraction and modular design decoupled function from hardware, enabling resilient, interoperable stacks. Today, the frontier blends heterogeneous accelerators with layered memory hierarchies, a shift that challenges conventional progress metrics and invites continued scrutiny of co-design and investment decisions.

How Computing Hardware Evolved: A Foundational Overview

Computing hardware has evolved through a succession of fundamental shifts in design philosophy, materials, and manufacturing capabilities that together delivered progressively higher performance at lower cost. This overview traces two core drivers: improvements in storage bandwidth, and the emergence of software abstraction that decouples function from hardware. That decoupling enables scalable architectures, modular optimization, and freer experimentation within disciplined engineering practice.


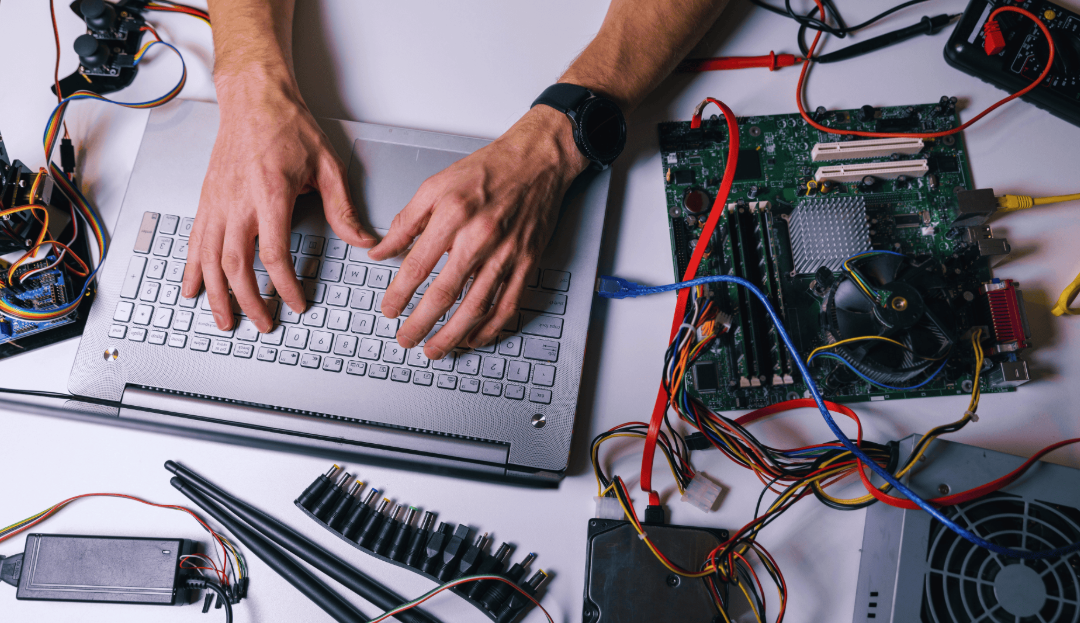

From Vacuum Tubes to Transistors: The Miniaturization Milestones

From vacuum tubes to transistors, the trajectory of miniaturization marks a decisive shift in digital logic, moving from fragile, power-hungry elements to compact, reliable devices capable of vastly higher integration.

The transition made large-scale integration possible, enabling dense circuit architectures. Vacuum tubes persisted briefly as transitional relics, while transistors defined the path forward: each miniaturization milestone became achievable, and the pace of compact computation became increasingly predictable.

Architecture Shifts: Mainframes, Personal Computers, and the Rise of Parallelism

The shift from large, centralized mainframes to distributed personal computers redefined how organizations and individuals engage with computation, while the emergence of parallelism unlocked performance gains beyond single-processor limits.
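How far parallelism can push past a single processor depends on how much of a workload can actually run in parallel. The sketch below uses Amdahl's law, a standard textbook model that this overview does not itself cite, to estimate the ideal speedup; the function name `amdahl_speedup` and the 95% parallel fraction are illustrative assumptions, not measurements.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Ideal speedup when only `parallel_fraction` of the work scales across `workers`.

    Hypothetical helper for illustration; it ignores communication and
    scheduling overhead, so real systems will do worse.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part takes the same time regardless of worker count;
    # only the parallel part is divided among the workers.
    return 1.0 / (serial_fraction + parallel_fraction / workers)


if __name__ == "__main__":
    # Assumed workload: 95% of the work can be parallelized.
    for workers in (1, 2, 8, 64, 1024):
        print(f"{workers:>5} workers -> {amdahl_speedup(0.95, workers):.2f}x")
```

Under this model the speedup for a 95% parallel workload can never reach 20x, however many processors are added, which is one reason later designs turned to specialized hardware rather than simply more identical cores.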

Architecture is now evolving toward heterogeneity, adopting specialized accelerators such as neuromorphic chips and accounting for constraints such as the cooling requirements of quantum hardware. This trajectory supports scalable, resilient systems and open innovation, redefining efficiency, accessibility, and architectural imagination for future computing ecosystems.

The Current Frontier and What It Signals for the Next Era

The frontier now centers on integrating heterogeneous accelerators, advanced memory hierarchies, and novel substrates that extend performance and energy efficiency beyond traditional scaling. The implications are clear: modular architectures, closer hardware-software co-design, and continued progress even as Moore’s law slows.

The next era signals intensified specialization, cross-domain interoperability, and anticipatory provisioning for diverse workloads, guiding prudent investment and strategic experimentation.

Frequently Asked Questions

How Did Coding Languages Influence Hardware Design Decisions Over Time?

Coding languages influenced hardware design through their compilers: compiler-driven abstractions guided the design of instruction sets and prompted modular toolchains. Over time, runtime optimization priorities and robust tooling ecosystems emerged alongside concerns such as supply chain resilience and energy density targets, sustaining flexible, forward-looking architectures.

What Were the Key Social and Economic Drivers for Hardware Shifts?

Social and economic drivers steered hardware shifts, chiefly consumer demand and the dynamics of globalization. Design and investment decisions were anticipatory responses to evolving capability, changing markets, and interconnected competition.

Which Overlooked Innovations Propelled Hardware Efficiency in Earlier Decades?

In earlier decades, hardware efficiency was propelled by innovations that received little attention: memory design and interconnects. These elements quietly increased data throughput, reduced latency, and improved energy efficiency, enabling more scalable architectures. This suggests that future designs should prioritize system-wide memory hierarchies and interconnect optimization.
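The effect of a memory hierarchy on latency can be made concrete with the standard average-access-time calculation. The following is a minimal sketch, not taken from this article: the function name `average_access_time` and every latency and miss rate in the example are made-up illustrative values.

```python
from typing import Sequence, Tuple


def average_access_time(
    levels: Sequence[Tuple[float, float]],
    memory_latency_ns: float,
) -> float:
    """Expected latency of one access through a multi-level cache hierarchy.

    `levels` holds (hit_latency_ns, miss_rate) pairs ordered from the level
    closest to the processor outward. A miss at one level pays the expected
    cost of the next level; a miss at the last level pays `memory_latency_ns`.
    """
    expected = memory_latency_ns
    # Work from the outermost level back toward the processor.
    for hit_latency_ns, miss_rate in reversed(levels):
        if not 0.0 <= miss_rate <= 1.0:
            raise ValueError("miss_rate must be between 0 and 1")
        expected = hit_latency_ns + miss_rate * expected
    return expected


if __name__ == "__main__":
    # Illustrative numbers only: two cache levels in front of main memory.
    hierarchy = [(1.0, 0.10), (10.0, 0.20)]
    print(f"with caches:    {average_access_time(hierarchy, 100.0):.1f} ns")
    print(f"without caches: {average_access_time([], 100.0):.1f} ns")
```

With these assumed values, most accesses are served near the processor, so the expected latency is a small fraction of the main-memory latency. That is the kind of quiet, system-wide gain the answer above attributes to memory hierarchies.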

How Does Hardware Evolution Affect Software Compatibility and Maintenance?

Hardware evolution directly shapes software compatibility and the maintenance burden. As hardware drivers evolve and silicon vendors revise their products, software compatibility shifts with them, demanding proactive maintenance, precise updates, and deliberate upgrade decisions to keep systems secure and scalable.

What Roles Do Non-CPU Accelerators Play in Modern Systems?

Non-CPU accelerators deliver high performance and power efficiency by handling specialized workloads such as AI, encoding, and graph analytics, offloading that work from the CPU. They shape system architectures, drive energy-aware design, and support scalable computing strategies.

Conclusion

Computing hardware is converging on a coordinated continuum in which progress comes through modular, multilevel mechanisms. Manufacturers, researchers, and users are aligning around adaptable architectures built on accelerators and memory hierarchies. The trajectory favors thoughtful co-design, interoperable interfaces, and resilient, reconfigurable systems that resist stagnation. Looking ahead, the frontier promises new functionality and greater flexibility for faster, finer-grained, and more dependable computation.